How We Built an AI-First Operating System for Water Finance

Andre Paris

2026-05-04

Water is the most mispriced risk in corporate finance. Trillions of dollars in infrastructure, agriculture, and industrial assets depend on water availability — yet most organizations still manage water risk through spreadsheets, consultant reports, and manual assessments that take months to produce.

Celeste is a financial AI platform that empowers global sustainability, finance, and risk teams to quantify the cost of inaction on water, evaluate the ROI of resilience strategies, and mobilize capital to strengthen supply chains and the basins they depend on. Turning water complexity into clear investment decisions requires processing vast amounts of hydrological, financial, and regulatory data — the kind of problem that demands AI-native infrastructure, not bolted-on tools.

But building that infrastructure with a small, dedicated team meant we faced a structural challenge: the same team building a platform to solve water finance at scale was itself using AI in a scattered, ungoverned way. Different tools for different people, no shared learning, and no compounding.

The cost of that disorganization was concrete:

- Data leakage — proprietary financial models and prospect data being fed into unmanaged personal accounts

- Wasted effort — everyone discovering the same prompt tricks independently, nobody sharing what worked

- No compounding — every AI interaction was disposable. Nothing we learned stuck to the organization.

The question wasn't whether to adopt AI — we're building a financial AI platform. The question was whether to let our own AI usage happen randomly or build a system that compounds what we learn.

Why Water Finance Needs an AI-First Approach

The natural instinct is to pick the best tool for each job. Copilot for code, ChatGPT for general tasks, Perplexity for research, Apollo for sales. The problem is that for a focused team tackling a complex domain, every additional tool costs more in overhead than it saves in capability.

Each new vendor means another account to manage, another data handling policy, another set of conventions to document, another surface where proprietary financial data could leak. When you're handling sensitive water risk models and investment analyses, the marginal benefit of a second AI tool doesn't justify the cost of fragmentation.

We chose Claude as the only approved AI platform. Claude Code for engineering. Claude Team workspace for everyone else.

Why Claude specifically?

- MCP (Model Context Protocol) — Claude Code has native MCP support, which lets us build enforcement directly into the AI tool engineers use every day. No other coding assistant offered this.

- Projects — Claude Team gives non-engineering team members AI workspaces with persistent context, so product, sales, and operations all work within the same ecosystem.

- One relationship — one billing line, one set of capabilities to learn deeply rather than five tools learned shallowly.

The default answer at Celeste is: "solve it with Claude first." If Claude genuinely can't handle something, we evaluate alternatives — but the bar is high.

Three Layers of AI Context

Choosing a single platform isn't enough. The harder question is: how do you make AI actually useful across a whole company? The answer is context — giving the AI the rules, conventions, and knowledge it needs to be helpful, not just capable.

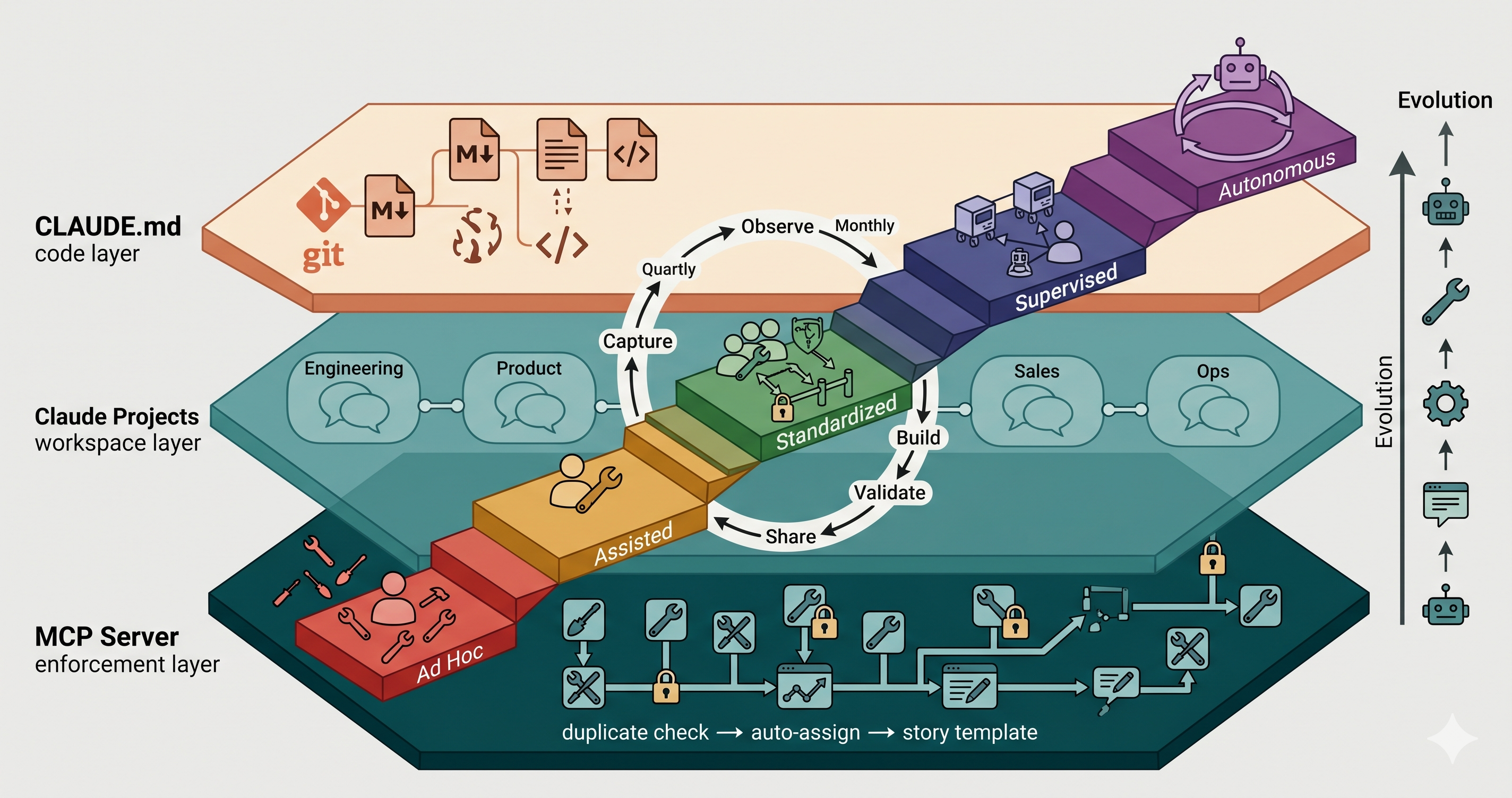

We built three complementary layers, each serving a different purpose:

Layer 1: CLAUDE.md — Engineering Context in Git

A markdown file checked into our Git repository, automatically loaded by Claude Code every time someone works in the codebase. It contains architecture conventions, multi-tenant rules, ClickUp workflows, and code patterns. It's version-controlled alongside the code it describes.

Key idea: Context that changes with the code lives with the code.

Layer 2: Claude Projects — AI Workspaces for All Departments

Project-level system prompts in the Claude Team workspace, configured per department. Product gets spec templates and prioritization frameworks. Sales gets outbound playbooks. No Git knowledge required — the web interface is accessible to everyone.

Key idea: Non-technical teams need AI context too. Meet them where they already work.

Layer 3: MCP Server — Enforcement Through Tooling

Our custom MCP server (celeste-mcp-server) deployed on AWS Lambda with 13 tools. It enforces business rules through tooling — duplicate detection before task creation, auto-assignment based on category, standardized story templates. Rules in the MCP server aren't suggestions. They're the way things work.

The key insight is that these layers differ in how strongly they enforce. CLAUDE.md is a suggestion: Claude follows it, but nothing prevents you from ignoring it. Projects are conventions: they shape behavior but can be overridden. The MCP server is enforcement: a task created through its tools cannot skip the duplicate check.
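To make the enforcement idea concrete, here is a minimal Python sketch of a task-creation tool that cannot skip its checks. It is not the actual celeste-mcp-server code: the function name `create_story`, the in-memory `TaskStore`, the category-to-assignee map, and the template wording are all assumptions made for illustration, and Python is used only for brevity.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical category-to-owner map; the real assignment rules are not described here.
ASSIGNEE_BY_CATEGORY = {"bug": "engineering-oncall", "feature": "product-lead"}

# Hypothetical story template; the real template wording is an assumption.
STORY_TEMPLATE = "As a {role}, I want {goal}, so that {benefit}."


class DuplicateTaskError(Exception):
    """Raised when a task with an equivalent title already exists."""


def _normalize(title: str) -> str:
    # Compare titles case-insensitively and ignore extra whitespace.
    return " ".join(title.lower().split())


@dataclass
class TaskStore:
    """In-memory stand-in for the task tracker."""
    tasks: list = field(default_factory=list)

    def find_by_title(self, title: str) -> Optional[dict]:
        wanted = _normalize(title)
        return next((t for t in self.tasks if _normalize(t["title"]) == wanted), None)


def create_story(store: TaskStore, title: str, category: str,
                 role: str, goal: str, benefit: str) -> dict:
    """Create a task; the checks run on every call and cannot be bypassed."""
    # 1. Duplicate detection happens before anything is written.
    existing = store.find_by_title(title)
    if existing is not None:
        raise DuplicateTaskError(f"Task already exists: {existing['title']!r}")
    # 2. Auto-assignment is derived from the category, not chosen by the caller.
    if category not in ASSIGNEE_BY_CATEGORY:
        raise ValueError(f"Unknown category: {category!r}")
    # 3. The description always follows the standard template.
    task = {
        "title": title.strip(),
        "category": category,
        "assignee": ASSIGNEE_BY_CATEGORY[category],
        "description": STORY_TEMPLATE.format(role=role, goal=goal, benefit=benefit),
    }
    store.tasks.append(task)
    return task
```

Because the duplicate check lives inside the function rather than in a prompt, a caller has no path that creates a task without it. That is the practical difference between a convention and enforcement.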

Our 5-Stage AI Maturity Model

Most AI adoption frameworks assume you're an enterprise with hundreds of engineers. We needed something honest about where a small, focused team actually stands and practical about what "better" looks like when you're building financial infrastructure for a global problem.

Informed by AI maturity research from MIT CISR and Google's DORA program — but adapted for a small startup operating across all departments, not just engineering — we built a five-stage model:

- Stage 1 — Ad Hoc Exploration. Individuals experiment on their own, using personal accounts and general-purpose tools. No policies, no shared learning, no guardrails. The risk is high: inconsistent quality and potential data leakage through unmanaged accounts.

- Stage 2 — Assisted Work. The organization provides centralized AI tools and basic guidelines. AI supplements human work but doesn't change workflows. People know what to use, but adoption is inconsistent.

- Stage 3 — Standardized Workflows. AI is embedded into repeatable processes with automated guardrails. Teams operate with shared conventions — CLAUDE.md, MCP tools, Claude Projects. AI is how work gets done, not an optional add-on.

- Stage 4 — Supervised Automation. AI agents handle bounded tasks autonomously — running tests, creating PRs, enriching leads — with human oversight for higher-risk decisions. Agents trigger on events, not manual prompts.

- Stage 5 — End-to-End Autonomy. AI orchestrates entire workflows across systems. Issue to deployment. Prospect identification to CRM update. Humans focus on oversight, strategy, and exception handling.
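The step into Stage 4 rests on one rule: agents run bounded tasks on their own, and higher-risk actions wait for a person. A minimal Python sketch of that routing rule is below. The task names echo examples from this post, but the risk labels, the `route` function, and the allowlist structure are assumptions for illustration, not a description of a production system.

```python
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical allowlist of bounded tasks. The risk labels are assumed.
BOUNDED_TASKS = {
    "run_tests": Risk.LOW,
    "create_pr": Risk.LOW,
    "enrich_lead": Risk.LOW,
    "deploy_staging": Risk.HIGH,
}


def route(task_name: str, approved_by: Optional[str] = None) -> str:
    """Return 'run', 'needs_approval', or 'rejected' for a requested task."""
    risk = BOUNDED_TASKS.get(task_name)
    if risk is None:
        # Anything outside the allowlist is not a bounded task.
        return "rejected"
    if risk is Risk.HIGH and approved_by is None:
        # Higher-risk work pauses until a human signs off.
        return "needs_approval"
    return "run"
```

In this sketch, `route("run_tests")` proceeds immediately, `route("deploy_staging")` waits for approval, and a task that is not on the allowlist is rejected outright.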

Where We Are Today: The AI Adoption Scorecard

Frameworks are only useful if you're honest about where you stand. Here's our actual assessment across seven capability dimensions, department by department.

- Engineering is at Stage 3, transitioning to Stage 4. We have CLAUDE.md conventions, an MCP server with 13 tools, the `patterns` skill enforcing architecture standards, and CI/CD gates that treat AI-generated code the same as human-written code. The bottleneck for Stage 4 is monitoring and escalation paths for autonomous agents.

- Product is at Stage 2, moving toward Stage 3. Claude Team is used for PRD drafting and research synthesis, but we don't yet have standardized Claude Projects with product-specific conventions.

- Sales is at Stage 2. Apollo, HubSpot, and n8n are active, but the pipeline isn't fully automated. Claude Team is available but not deeply integrated into daily sales workflows.

- Operations is at Stage 1. Honest truth: no structured AI usage in place yet. Individual experimentation at best.

| Capability | Engineering | Product | Sales | Ops |
|---|---|---|---|---|
| Enablement | 3 | 2 | 2 | 1 |
| Policy & Governance | 3 | 2 | 2 | 1 |
| Validation & Quality | 3 | 2 | 2 | 1 |
| Workflow Integration | 3 | 2 | 2 | 1 |
| Automation Level | 3 | 2 | 2 | 1 |
| Context & Data Access | 3 | 2 | 2 | 1 |
| Cross-Functional | 3 | 2 | 2 | 1 |

The pattern is clear: engineering is ahead because that's where we invested first. Product and Sales are at Stage 2: tools are available, but workflows aren't standardized. Operations is still at Stage 1, with no structured usage yet. This is normal for any team scaling AI adoption. You have to lead with one department and let the infrastructure it builds benefit the rest.

Don't try to advance every department simultaneously. Lead with the department that's most ready, build reusable infrastructure, then propagate.
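One way to keep a scorecard like this honest is to store it as data and derive each department's stage from it. The Python sketch below encodes the scores from the table above. It assumes, purely for illustration, that a department's overall stage is its weakest dimension; the function names are hypothetical.

```python
# Scores copied from the scorecard table: capability -> department -> stage.
DEPARTMENTS = ("Engineering", "Product", "Sales", "Ops")
CAPABILITIES = (
    "Enablement",
    "Policy & Governance",
    "Validation & Quality",
    "Workflow Integration",
    "Automation Level",
    "Context & Data Access",
    "Cross-Functional",
)
SCORECARD = {
    capability: dict(zip(DEPARTMENTS, (3, 2, 2, 1)))
    for capability in CAPABILITIES
}


def department_stage(scorecard: dict, department: str) -> int:
    """Assumed rule: a department is only as mature as its weakest dimension."""
    return min(row[department] for row in scorecard.values())


def lead_department(scorecard: dict) -> str:
    """Pick the most-ready department, the one to invest in first."""
    return max(DEPARTMENTS, key=lambda d: department_stage(scorecard, d))
```

With the scores above, `lead_department(SCORECARD)` returns `"Engineering"`. Taking the minimum is a deliberately conservative choice: a single lagging dimension, such as governance, holds the whole department at the lower stage.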

The Continuous Improvement Loop

AI adoption isn't a migration you finish. The tools, skills, and agents that work today will need to evolve as the team grows, the product changes, and AI capabilities advance. We built a systematic improvement loop so this evolution happens deliberately rather than by accident.

Every AI capability at Celeste — whether it's a CLAUDE.md convention, a Claude Project, an MCP tool, or an agent — follows the same lifecycle: Observe friction and missed opportunities, Capture them in shared channels, Build them into CLAUDE.md rules or MCP tools, Validate them in real work, and Share them across teams.

The cadence that makes it work

- Daily (individual) — Notice friction points. Share discoveries in #ai-tips. Flag AI outputs that were wrong.

- Biweekly (department) — Review captured friction, update Claude Projects or CLAUDE.md, demo new skills.

- Monthly (cross-functional) — Share across departments, review MCP tool usage metrics, identify workflow opportunities.

- Quarterly (organization) — Full maturity reassessment, policy review, plan the next quarter's priorities.

How AI Skills Become Autonomous Agents

AI capabilities don't start as agents. They mature through a predictable progression. Understanding this ladder helps you know what to build next — and resist the temptation to skip straight to "fully autonomous agents" before you've nailed the fundamentals.

Here's the real progression, with examples from our codebase:

- Prompt → Repeatable Prompt: Someone discovers a prompt that works well for outbound emails — context about the prospect, likely pain points, a specific Celeste capability, and a single CTA. They share it in #ai-tips. Now the team has a reusable template instead of everyone starting from scratch.

- Repeatable Prompt → Skill: The `patterns` skill in Claude Code. Instead of manually reminding Claude about TypeScript conventions every session, the skill encodes them and applies them automatically during code generation.

- Skill → MCP Tool: ClickUp task creation started as manual prompts ("create a task for X"), became a convention in CLAUDE.md ("always check for duplicates first"), and is now `clt__create_story`, an MCP tool that enforces duplicate checking, auto-assignment, and story templates automatically.

- MCP Tool → Agent: The `clt__doc_to_tasks` pipeline could evolve from a manually invoked tool into an agent that watches for newly approved Google Docs and automatically proposes ClickUp tasks for review.

- Agent → Orchestrated Workflow: A product spec approval triggers task creation → branch creation → code scaffolding → test setup, all orchestrated by connected agents with human checkpoints at key decision points.

Don't build agents before you've built skills. Don't build skills before you've shared prompts. Each level earns the right to the next.
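The first rung of the ladder is cheap to build. As a minimal Python sketch, the outbound-email prompt from the first example in the list can be captured as a template with its four parts as parameters. The template wording and the names `OUTBOUND_EMAIL_PROMPT` and `build_outbound_prompt` are assumptions; only the four ingredients come from that example.

```python
from string import Template

# Assumed wording. The four fields mirror the ingredients named in the example:
# prospect context, likely pain points, one capability, and a single CTA.
OUTBOUND_EMAIL_PROMPT = Template(
    "Draft a short outbound email.\n"
    "Prospect context: $prospect_context\n"
    "Likely pain points: $pain_points\n"
    "Celeste capability to highlight: $capability\n"
    "End with exactly one call to action: $cta\n"
)


def build_outbound_prompt(prospect_context: str, pain_points: list,
                          capability: str, cta: str) -> str:
    """Fill the shared template so every email prompt has the same structure."""
    return OUTBOUND_EMAIL_PROMPT.substitute(
        prospect_context=prospect_context,
        pain_points="; ".join(pain_points),
        capability=capability,
        cta=cta,
    )
```

Making all four arguments required is the point of the design: nobody can send the prompt without stating the prospect's context or committing to a single call to action.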

What's Next for AI-Driven Water Risk Assessment

We're entering Phase 3: Automation. The goals are concrete:

- Engineering agents for bounded tasks — test execution, PR creation, staging deployment — with monitoring dashboards and escalation paths

- Sales pipeline automation — n8n workflows connecting Apollo enrichment to HubSpot updates, LinkedIn automation running on schedule

- Product workflow automation — the `clt__doc_to_tasks` pipeline as the standard path from approved specs to engineering tickets

- Operations onboarding — Claude Team access and training for report drafting and data summarization

The meta-lesson

If we started over, we'd do it the same way: governance first, then acceleration. The natural instinct is to start with the exciting stuff — agents, automation, multi-model workflows. But without the foundation (one platform, clear policies, shared context), every agent you build is a liability rather than an asset.

Start with the boring stuff. Pick one tool. Write a policy. Share a few prompts. Build a single MCP tool. The compound interest on those early decisions is what makes the exciting stuff actually work later.

Start with governance, then accelerate. The compound interest on early decisions is what makes the exciting stuff actually work.

The Celeste team writes about water risk, climate finance, and enterprise resilience strategy. We help organizations make water a financial decision.